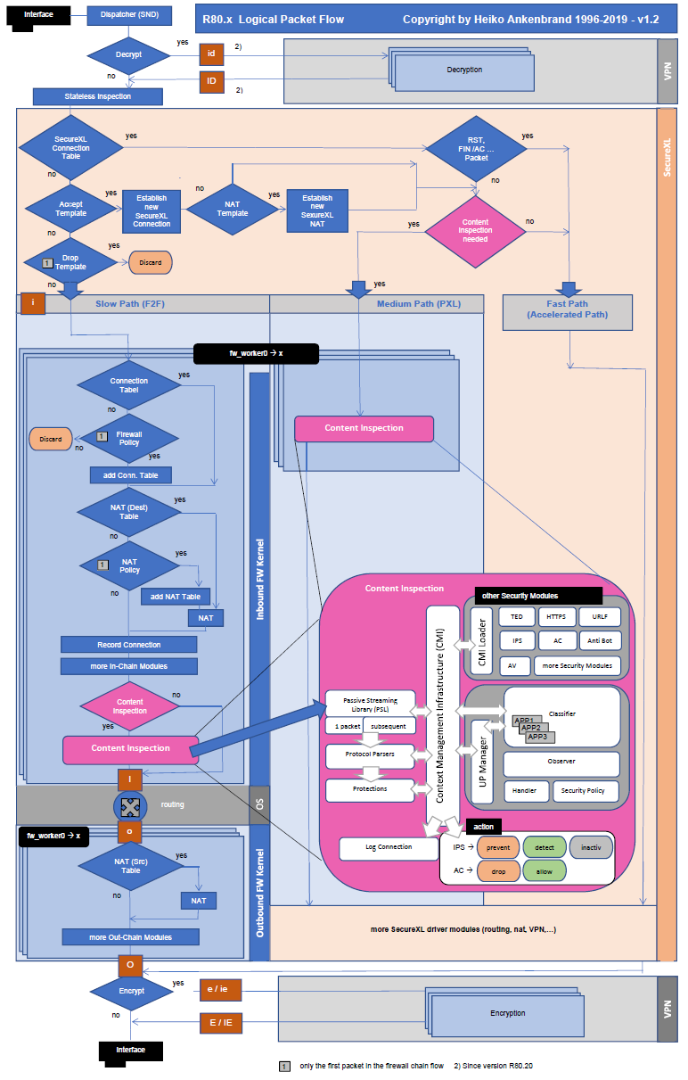

Logical Packet Flow

R80.x Security Gateway Architecture (Logical Packet Flow)

| Introduction |

|---|

This document describes the packet flow (and, in part, the connection flow) through a Check Point Security Gateway running R80.10 and above. It covers SecureXL, CoreXL, Content Inspection, stateful inspection, network and port address translation (NAT), MultiCore Virtual Private Network (VPN) functions and forwarding, all of which are applied per packet on the inbound and outbound interfaces of the device. It is intended as an overview of the basic technologies of a Check Point firewall. We have reworked the document several times together with Check Point, so that it is now finally available.

| What’s new in R80.10 and above |

|---|

R80.10 and above offer many technical innovations compared with R77. I will look at the following in this article:

– new fw monitor inspection points for VPN (e and E)

– new MultiCore VPN

– UP Manager

– Content Awareness (CTNT)

R80.20 and above:

– SecureXL has been significantly revised in R80.20 and now works in user space. This has also led to some changes in “fw monitor”.

– There are new fw monitor chain (SecureXL) objects that do not run in the virtual machine.

– The reason for the move to user space: the SecureXL driver takes a certain amount of kernel memory per core, and that was adding up to more kernel memory than Intel/Linux was allowing.

– SecureXL now supports Async SecureXL with Falcon cards

– New in the high-level acceleration architecture (SecureXL on Acceleration Card): Streaming over SecureXL, Lite Parsers, Scalable SecureXL, Acceleration stickiness

– Policy push acceleration on Falcon cards

– Falcon cards for: Low Latency, High Connections Rate, SSL Boost, Deep Inspection Acceleration, Modular Connectivity, Multiple Acceleration modules

– Falcon cards are compatible with the 5900, 15000 and 23000 appliance series and are available as 1G (8×1 GbE), 10G (4×10 GbE) and 40G (2×40 GbE)

| Flowchart |

|---|

| VPN |

|---|

Decrypting a packet:

MultiCore support for IPsec VPN is new in R80.10 and above. An IPsec packet enters the Security Gateway. The decrypted original packet is forwarded from the SND to the connection CoreXL FW instance for FireWall inspection at the Pre-Inbound chain “i”. The decrypted, inspected packet is then sent to the OS kernel.

Encrypting a packet:

Encryption information is prepared at the Post-Outbound chain “O”. The vpnk module on the tunnel CoreXL FW instance gets the packet before encryption at chain “e”. The packet to be encrypted is forwarded from the SND to the connection CoreXL FW instance for FireWall inspection. The packet is encrypted by the vpnk module at chain “E”. Afterwards the IPsec packet is sent out on the interface. The fw monitor inspection points “e” and “E” are new in R80.10, and “oe” and “OE” are new in R80.20.

Note: The “e” and “E” inspection points exist only on the outbound side, for encrypting packets, and not on the inbound side for decrypting packets.

Note:

F2V – Describes VPN Connections via the F2F path.

| Firewall Core |

|---|

Inbound Stateless Check:

The firewall does preliminary “stateless” checks that do not require context in order to decide whether to accept a packet or not. For instance we check that the packet is a valid packet and if the header is compliant with RFC standards.

Anti-Spoofing:

Anti-Spoofing verifies that the source IP of each packet matches the interface on which it was received. On internal interfaces we only allow packets whose source IP is within the user-defined network topology. On the external interface we allow all source IPs except for ones that belong to internal networks.
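The anti-spoofing rule above can be sketched in a few lines of Python. This is only an illustration of the logic, not Check Point code: the interface names, the networks and the function name `passes_anti_spoofing` are assumptions made up for this example.

```python
from ipaddress import ip_address, ip_network

# Hypothetical topology: internal interface name -> networks defined behind it.
INTERNAL_TOPOLOGY = {
    "eth1": [ip_network("10.1.0.0/16")],
    "eth2": [ip_network("192.168.10.0/24")],
}
# Hypothetical external interface.
EXTERNAL_INTERFACES = {"eth0"}


def passes_anti_spoofing(interface, source_ip):
    """Return True if source_ip is plausible for the receiving interface."""
    src = ip_address(source_ip)
    if interface in EXTERNAL_INTERFACES:
        # External: accept every source that is NOT an internal address.
        return not any(
            src in net for nets in INTERNAL_TOPOLOGY.values() for net in nets
        )
    # Internal: accept only sources inside this interface's own topology.
    return any(src in net for net in INTERNAL_TOPOLOGY.get(interface, []))
```

A packet with source 192.168.10.5 arriving on the external interface `eth0` would be rejected here, because that address belongs to an internal network.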

Connection Setup:

A core component of the Check Point R80.x Threat Prevention gateway is the stateful inspection firewall. A stateful firewall tracks the state of network connections in memory to identify other packets belonging to the same connection and to dynamically open connections that belong to the same session. Allowing FTP data connections using the information in the control connection is one such example. Using Check Point INSPECT code the firewall is able to dynamically recognize that the FTP control connection is opening a separate data connection to transfer data. When the client requests that the server generate the back-connection (an FTP PORT command), INSPECT code extracts the port number from the request. Both client and server IP addresses and both port numbers are recorded in an FTP-data pending request list. When the FTP data connection is attempted, the firewall examines the list and verifies that the attempt is in response to a valid request. The list of connections is maintained dynamically, so that only the required FTP ports are opened.
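As a rough sketch of the pending request list described above, the following Python models only the FTP PORT case. It is not INSPECT code. The names `pending_ftp_data`, `on_control_payload` and `allow_data_connection` are hypothetical, and for brevity the entry stores only the two IP addresses and the announced client port. The port arithmetic (two decimal bytes, high byte first) follows the PORT command format of RFC 959.

```python
import re

# Pending FTP data connections: (server_ip, client_ip, client_data_port).
pending_ftp_data = set()

# "PORT h1,h2,h3,h4,p1,p2" as defined in RFC 959.
PORT_RE = re.compile(r"^PORT (\d+),(\d+),(\d+),(\d+),(\d+),(\d+)\s*$")


def on_control_payload(client_ip, server_ip, payload):
    """If the control payload is a PORT command, remember the data port."""
    m = PORT_RE.match(payload)
    if m is None:
        return None
    p1, p2 = int(m.group(5)), int(m.group(6))
    data_port = p1 * 256 + p2  # port is transmitted as two decimal bytes
    pending_ftp_data.add((server_ip, client_ip, data_port))
    return data_port


def allow_data_connection(src_ip, dst_ip, dst_port):
    """Allow a data connection only if it answers a pending request."""
    key = (src_ip, dst_ip, dst_port)
    if key in pending_ftp_data:
        pending_ftp_data.discard(key)  # the opening is used exactly once
        return True
    return False
```

Because the entry is removed once it has matched, only the port that was actually requested is opened, and only for one connection attempt.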

| SecureXL |

|---|

SecureXL is a software acceleration product installed on Security Gateways. Performance Pack uses SecureXL technology and other innovative network acceleration techniques to deliver wire-speed performance for Security Gateways. SecureXL is implemented either in software or in hardware:

- SAM cards on Check Point 21000 appliances

- ADP cards on IP Series appliances

- Falcon cards (new in R80.20) on different appliances

The SecureXL device minimizes the connections that are processed by the INSPECT driver. SecureXL accelerates connections in two ways: throughput acceleration and connection rate acceleration.

New in R80.10:

In R80.10 SecureXL adds support for Domain Objects, Dynamic Objects and Time Objects. CoreXL accelerates VPN traffic by distributing Next Generation Threat Prevention inspection across multiple cores.

New in R80.20:

SecureXL was significantly revised in R80.20. It no longer runs in Linux kernel mode but in user space. Resources such as memory are very limited in kernel mode, so the advantage of the move is that more resources are available in user space.

The SecureXL driver takes a certain amount of kernel memory per core and that was adding up to more kernel memory than Intel/Linux was allowing. On the 23900 in particular, we could not leverage all the processor cores due to this limitation. By moving all or most of SecureXL to user space, it’s possible to leverage more processor cores, as the firewall can run entirely in user space. In R80.20 in non-VSX mode it still does not do so by default, but it can be enabled.

It also means that certain kinds of low-level packet processing, which could not easily be done in SecureXL while it ran in the kernel, are now possible. For VSX in particular, it means you can now configure the penalty box features on a per-VS basis. It also improves session establishment rates on the higher-end appliances.

In addition, the following functions have been integrated in R80.20 SecureXL:

- SecureXL on Acceleration Cards (AC)

- Streaming over SecureXL

- Lite Parsers

- Async SecureXL

- Scalable SecureXL

- Acceleration stickiness

- Policy push acceleration

Throughput Acceleration – The first packets of a new TCP connection require more processing when processed by the firewall module. If the connection is eligible for acceleration, after minimal security processing the packet is offloaded to the SecureXL device associated with the proper egress interface. Subsequent packets of the connection can be processed on the accelerated path and directly sent from the inbound to the outbound interface via the SecureXL device.

Connection Rate Acceleration – SecureXL also improves the rate of new connections (connections per second) and the connection setup/teardown rate (sessions per second). To accelerate the rate of new connections, connections that do not match a specified 5-tuple are still processed by SecureXL. For example, the source port can be masked so that only the other four tuple attributes require a match. When a connection is processed on the accelerated path, SecureXL creates a template of that connection that does not include the source port. A new connection that matches the other four attributes is processed on the accelerated path because it matches the template. The firewall module does not inspect the new connection, which increases firewall connection rates.
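A minimal sketch of the template idea, assuming a template is simply the 5-tuple with the source port left out. The names `Conn`, `template_key`, `accept_templates` and `handle_new_connection` are hypothetical, and the rule base is reduced to a callback.

```python
from typing import NamedTuple


class Conn(NamedTuple):
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    proto: str


def template_key(conn):
    """The 5-tuple with the source port masked out."""
    return (conn.src_ip, conn.dst_ip, conn.dst_port, conn.proto)


accept_templates = set()


def handle_new_connection(conn, rulebase_accepts):
    """Return which component set up the connection."""
    if template_key(conn) in accept_templates:
        return "accelerated"  # template hit, no rule base match needed
    if rulebase_accepts(conn):
        accept_templates.add(template_key(conn))
        return "firewall"     # first connection takes the full rule match
    return "dropped"
```

The first connection from 10.0.0.5:40000 to 198.51.100.7:443 goes through the rule base and leaves a template behind. A second connection from source port 40001 to the same destination then matches the template and is never shown to the rule base.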

SecureXL and the firewall module keep their own state tables and communicate updates to each other.

- Connection notification – SecureXL passes the relevant information about accelerated connections that match an accept template.

- Connection offload – Firewall kernel passes the relevant information about the connection from firewall connections table to SecureXL connections table.

In addition to accept templates the SecureXL device is also able to apply drop templates which are derived from security rules where the action is drop. In addition to firewall security policy enforcement, SecureXL also accelerates NAT, and IPsec VPN traffic.

QXL – Technology name for the combination of SecureXL and QoS (R77.10 and above). It has no direct association with PXL and is used exclusively for QoS. However, the QoS path can also be used in combination with PSL.

SAM card and Falcon card (R80.20 and above) – SAM stands for Security Acceleration Module. Connections that use a SAM/Falcon card are accelerated by SecureXL and are processed by the SAM/Falcon card’s CPU instead of the main CPU (refer to the 21000 Appliance Security Acceleration Module Getting Started Guide).

SecureXL uses the following templates:

If templating is used under SecureXL, the templates are created when the firewall ruleset is installed.

Accept Template – Feature that accelerates the speed at which a connection is established by matching a new connection to a set of attributes. When a new connection matches the Accept Template, subsequent connections are established without performing a rule match and therefore are accelerated. Accept Templates are generated from active connections according to policy rules. Currently, Accept Template acceleration is performed only on connections with the same destination port (using wildcards for source ports).

Accept Templates are enabled by default if SecureXL is used.

Drop Template – Feature that accelerates the speed at which a connection is dropped by matching a new connection to a set of attributes. When a new connection matches the Drop Template, subsequent connections are dropped without performing a rule match and therefore are accelerated. Currently, Drop Template acceleration is performed only on connections with the same destination port (does not use wildcards for source ports).

Drop Templates are disabled by default if SecureXL is used. They can be activated via SmartDashboard, and this does not require a reboot of the firewall.

NAT Templates – Using SecureXL Templates for NAT traffic is critical to achieve a high session rate for NAT. SecureXL Templates are supported for Static NAT and Hide NAT using the existing SecureXL Templates mechanism. Normally the first packet would use the F2F path. However, if SecureXL is used, the first packet will not be forwarded to the F2F path if an Accept Template and a NAT Template match. Enabling or disabling NAT Templates requires a firewall reboot.

R80.10 and lower: NAT Template is disabled by default.

R80.20 and above: NAT Template is enabled by design.

SecureXL path:

Fast path (Accelerated Path) – Packet flow when the packet is completely handled by the SecureXL device. It is processed and forwarded to the network.

Note: In many discussions and images, the SXL path is labeled as the “accelerated path”. This also happened to me by mistake in this flowchart.

Medium path (PXL) – The CoreXL layer passes the packet to one of the CoreXL FW instances to perform the processing (even when CoreXL is disabled, the CoreXL infrastructure is used by the SecureXL device to send the packet to the single FW instance that still functions). When the Medium Path is available, the TCP handshake is fully accelerated with SecureXL. The rulebase match for the first packet is achieved through an existing connection acceleration template. SYN-ACK and ACK packets are also fully accelerated. However, once data starts flowing, the packets are handled by an FWK instance so that the data can be streamed for Content Inspection. Any packet containing data is sent to FWK for data extraction to build the data stream. RST, FIN and FIN-ACK packets are once again handled only by SecureXL, as they do not contain any data that needs to be streamed. This path is available only when CoreXL is enabled.
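The division of work on the medium path can be illustrated with a very small sketch. It assumes the only criterion is whether a packet carries payload. The function name `medium_path_handler` and the sample session are made up for this illustration, and real behaviour depends on the enabled blades.

```python
def medium_path_handler(payload_len):
    """Return the component that handles one TCP packet on the medium path."""
    if payload_len > 0:
        return "FWK"       # data has to be streamed for Content Inspection
    return "SecureXL"      # handshake and teardown packets carry no data


# Hypothetical session: (TCP flags, payload length in bytes).
session = [
    ("SYN", 0), ("SYN-ACK", 0), ("ACK", 0),
    ("PSH-ACK", 1460),
    ("FIN-ACK", 0), ("RST", 0),
]
trace = [(flags, medium_path_handler(length)) for flags, length in session]
```

In this trace only the PSH-ACK packet with 1460 bytes of data ends up at an FWK instance.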

Packet flow when the packet is handled by the SecureXL device, except for:

- IPS (some protections)

- VPN (in some configurations)

- Application Control

- Content Awareness

- Anti-Virus

- Anti-Bot

- HTTPS Inspection

- Proxy mode

- Mobile Access

- VoIP

- Web Portals.

PXL vs. PSLXL – Technology name for the combination of SecureXL and PSL. PXL was renamed to PSLXL in R80.20. From my point of view, this is the better and more correct term.

Medium path (CPASXL) – In R80.20, CPAS also uses the SecureXL path. In R80.10 and R77.30, CPAS works through the F2F path; in R80.20 it is offered in the SecureXL path as CPASXL, which should lead to higher performance. Check Point Active Streaming (CPAS) allows data to be changed and plays the role of a “man in the middle”. Several protocols and features use CPAS, for example: Client Authentication, VoIP (SIP, Skinny/SCCP, H.323, etc.), the Data Leak Prevention (DLP) blade, Security Server processes, etc. I think its effect on tuning should not be underestimated.

Slow path or Firewall path (F2F) – Packet flow when the SecureXL device is unable to process the packet. The packet is passed on to the CoreXL layer and then to one of the CoreXL FW instances for full processing. This path also processes all packets when SecureXL is disabled.

New in R80.20:

Inline Streaming path, Medium Streaming path, Host path and Buffer path – These are new SecureXL paths used in conjunction with Falcon cards. They are described in more detail in the article “R80.x Security Gateway Architecture (Acceleration Card Offloading)”.

Note on Falcon cards: In theory and in practice there are more than these three paths. This has to do with the offloading of SAM and Falcon cards (new in R80.20) and with QXL (Quality of Service) and other SecureXL technologies. That is beyond the scope of this article.

| SecureXL chain modules (new in R80.20 and above) |

|---|

SecureXL has been significantly revised in R80.20 and now works in user space. This has also led to some changes in “fw monitor”.

The new fw monitor chain modules (SecureXL) do not run in the virtual machine (vm).

SecureXL inbound (sxl_in) > Packet received in SecureXL from network

SecureXL inbound CT (sxl_ct) > Accelerated packets moved from inbound to outbound processing (post routing)

SecureXL outbound (sxl_out) > Accelerated packet starts outbound processing

SecureXL deliver (sxl_deliver) > SecureXL transmits accelerated packet

There are more new chain modules in R80.20:

vpn before offload (vpn_in) > FW inbound preparing the tunnel for offloading the packet (along with the connection)

fw offload inbound (offload_in) > FW inbound that performs the offload

fw post VM inbound (post_vm) > Packet was not offloaded (slow path) – continue processing in FW inbound

| CoreXL |

|---|

CoreXL is a performance-enhancing technology for Security Gateways on multi-CPU-core processing platforms. CoreXL enhances Security Gateway performance by enabling the processing CPU cores to concurrently perform multiple tasks. CoreXL provides almost linear scalability of performance, according to the number of processing CPU cores on a single machine. The increase in performance is achieved without requiring any changes to management or to network topology.

On a Security Gateway with CoreXL enabled, the Firewall kernel is replicated multiple times. Each replicated copy, or FW instance, runs on one processing CPU core. These FW instances handle traffic concurrently, and each FW instance is a complete and independent FW inspection kernel. When CoreXL is enabled, all the FW kernel instances in the Security Gateway process traffic through the same interfaces and apply the same security policy.

R80.20 CoreXL does not support these Check Point features: Overlapping NAT, VPN Traditional Mode, 6in4 traffic (this traffic is always processed by the global CoreXL FW instance #0, fw_worker_0), and more (see CoreXL Known Limitations).

Secure Network Distributor (SND) – Traffic entering network interface cards (NICs) is directed to a processing CPU core running the SND, which is responsible for:

- Processing incoming traffic from the network interfaces

- Securely accelerating authorized packets (if SecureXL is enabled)

- Distributing non-accelerated packets among Firewall kernel instances (SND maintains global dispatching table – which connection was assigned to which instance)

SND does not really touch any packet. The decision to stick to a particular FWK core is made at the first packet of a connection, at a very high level, before anything else. Depending on the SXL settings, SXL can in most cases offload the decryption calculations. In some other cases, such as with Route-Based VPN, decryption is done by FWK.

Firewall Instance (fw_worker) – On a Security Gateway with CoreXL enabled, the Firewall kernel is replicated multiple times. Each replicated copy, or Firewall Instance, runs on one CPU processing core. These FW instances handle traffic concurrently, and each FW instance is a complete and independent Firewall inspection kernel. When CoreXL is enabled, all the Firewall kernel instances on the Security Gateway process traffic through the same interfaces and apply the same security policy.

Dynamic Dispatcher – Rather than statically assigning new connections to a CoreXL FW instance based on packet’s IP addresses and IP protocol (static hash function), the new dynamic assignment mechanism is based on the utilization of CPU cores, on which the CoreXL FW instances are running. The dynamic decision is made for first packets of connections, by assigning each of the CoreXL FW instances a rank, and selecting the CoreXL FW instance with the lowest rank. The rank for each CoreXL FW instance is calculated according to its CPU utilization. The higher the CPU utilization, the higher the CoreXL FW instance’s rank is, hence this CoreXL FW instance is less likely to be selected by the CoreXL SND. The CoreXL Dynamic Dispatcher allows for better load distribution and helps mitigate connectivity issues during traffic “peaks”, as connections opened at a high rate that would have been assigned to the same CoreXL FW instance by a static decision, will now be distributed to several CoreXL FW instances.
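The selection logic can be sketched as follows. How Check Point actually computes the rank is not described here, so this example simply assumes that the rank equals the current CPU utilization in percent. The names `select_instance`, `dispatch` and `dispatch_table` are hypothetical.

```python
# Global dispatching table: connection key -> CoreXL FW instance id.
dispatch_table = {}


def select_instance(cpu_utilization):
    """Pick the FW instance with the lowest rank.

    cpu_utilization maps instance id -> CPU utilization in percent.
    Assumption: rank == utilization; ties go to the lower instance id.
    """
    return min(cpu_utilization, key=lambda inst: (cpu_utilization[inst], inst))


def dispatch(conn_key, cpu_utilization):
    """Decide on the first packet only; later packets stick to that instance."""
    if conn_key not in dispatch_table:
        dispatch_table[conn_key] = select_instance(cpu_utilization)
    return dispatch_table[conn_key]
```

A connection stays on the instance chosen for its first packet even if that instance becomes busier afterwards, while new connections are steered to whichever instance has the lowest rank at that moment.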

Multi Queue – Network interfaces on a security gateway typically receive traffic at different throughputs; some are busier than others. At a low level, when a packet is received from the NIC, then a CPU core must be “interrupted” to the exclusion of all other processes, in order to receive the packet for processing. To avoid bottlenecks we allow multiple buffers, and therefore CPU cores, to be affined to an interface. Each affined buffer can “interrupt” its own CPU core allowing high volumes of inbound packets to be shared across multiple dispatchers.

When most of the traffic is accelerated by SecureXL, the CPU load from the CoreXL SND instances can be very high, while the CPU load from the CoreXL FW instances can be very low. This is an inefficient utilization of CPU capacity. By default, the number of CPU cores allocated to CoreXL SND instances is limited by the number of network interfaces that handle the traffic. Because each interface has one traffic queue, only one CPU core can handle each traffic queue at a time. This means that each CoreXL SND instance can use only one CPU core at a time for each network interface. Check Point Multi-Queue lets you configure more than one traffic queue for each network interface. For each interface, you can use more than one CPU core (that runs CoreXL SND) for traffic acceleration. This balances the load efficiently between the CPU cores that run the CoreXL SND instances and the CPU cores that run CoreXL FW instances.
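The limitation can be put into a small piece of arithmetic. This is a simplified model of my own, assuming every queue is served by at most one core and all interfaces have the same number of queues; the function name `usable_snd_cores` is hypothetical.

```python
def usable_snd_cores(snd_cores, interfaces, queues_per_interface=1):
    """Upper bound on the SND cores that can work in parallel.

    One core serves one traffic queue at a time, so the number of queues
    caps the number of busy SND cores.
    """
    return min(snd_cores, interfaces * queues_per_interface)
```

With four SND cores and two busy interfaces, only two cores can be used without Multi-Queue. With two queues per interface, all four can take part.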

Priority Queues – In some situations a Security Gateway can be overwhelmed. When traffic levels exceed the capabilities of the hardware, whether because of legitimate traffic or a DoS attack, it is vital that we can maintain management communications and continue to interact with dynamic routing neighbors. The Priority Queues functionality prioritizes control connections over data connections.
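The principle can be shown with a plain priority queue. The sketch assumes just two classes, control and data; the names `CONTROL`, `DATA`, `enqueue` and `dequeue` are chosen for this example and do not reflect how the gateway implements the feature internally.

```python
import heapq
from itertools import count

CONTROL, DATA = 0, 1   # lower number = higher priority
_queue = []
_arrival = count()     # keeps FIFO order within the same priority


def enqueue(packet, kind):
    heapq.heappush(_queue, (kind, next(_arrival), packet))


def dequeue():
    """Return the next packet: control traffic first, then data."""
    return heapq.heappop(_queue)[2]
```

If an OSPF hello arrives between two data packets, it is still dequeued first, so the routing adjacency survives even when the data queue is backing up.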

Affinity – Association of a particular network interface / FW kernel instance / daemon with a CPU core (either ‘Automatic’ (default), or ‘Manual’). The default CoreXL interface affinity setting for all interfaces is ‘Automatic’ when SecureXL is installed and enabled. If SecureXL is enabled – the default affinities of all interfaces are ‘Automatic’ – the affinity for each interface is automatically reset every 60 seconds, and balanced between available CPU cores based on the current load. If SecureXL is disabled – the default affinities of all interfaces are with available CPU cores – those CPU cores that are not running a CoreXL FW instance or not defined as the affinity for a daemon.

The association of a particular interface with a specific processing CPU core is called the interface’s affinity with that CPU core. This affinity causes the interface’s traffic to be directed to that CPU core and the CoreXL SND to run on that CPU core. The association of a particular CoreXL FW instance with a specific CPU core is called the CoreXL FW instance’s affinity with that CPU core. The association of a particular user space process with a specific CPU core is called the process’s affinity with that CPU core. The default affinity setting for all interfaces is Automatic. Automatic affinity means that if SecureXL is enabled, the affinity for each interface is reset periodically and balanced between the available CPU cores. If SecureXL is disabled, the default affinities of all interfaces are with one available CPU core. In both cases, all processing CPU cores that run a CoreXL FW instance, or that are defined as the affinity for another user space process, are considered unavailable, and the affinity for interfaces is not set to those CPU cores.
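The “available cores” rule can be sketched like this. The real gateway balances by current load; the round-robin assignment below is a simplification of my own, and the names `available_cores` and `assign_interfaces` are hypothetical.

```python
def available_cores(all_cores, fw_instance_cores, daemon_cores):
    """Cores that neither run a CoreXL FW instance nor are pinned to a daemon."""
    return sorted(set(all_cores) - set(fw_instance_cores) - set(daemon_cores))


def assign_interfaces(interfaces, cores, securexl_enabled):
    """Map each interface to a core under Automatic affinity (simplified)."""
    if not cores:
        return {}
    if securexl_enabled:
        # Assumption: round robin stands in for load-based balancing.
        return {iface: cores[i % len(cores)] for i, iface in enumerate(interfaces)}
    # SecureXL disabled: all interfaces share one available core.
    return {iface: cores[0] for iface in interfaces}
```

On an eight-core gateway with FW instances on cores 4 to 7 and a daemon pinned to core 3, only cores 0 to 2 remain for the interfaces.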

Passive Streaming Library (PSL) – IPS infrastructure, which transparently listens to TCP traffic as network packets, and rebuilds the TCP stream out of these packets. Passive Streaming can listen to all TCP traffic, but process only the data packets, which belong to a previously registered connection.

PXL – Technology name for combination of SecureXL and PSL.

The maximal number of possible CoreXL IPv4 FW instances:

| Version | Check Point Appliance | Open Server |
|---|---|---|
| R80.10 (Gaia 32-bit) | 16 | 16 |
| R80.10 (Gaia 64-bit) | 40 | 40 |
| R77.30 (Gaia 32-bit) | 16 | 16 |
| R77.30 (Gaia 64-bit) | 32 | 32 |

| FW Monitor Inspection Points |

|---|

A packet passing through an R80.10 Security Gateway crosses six fw monitor inspection points (i, I, o, O, e, E). R80.20 adds further inspection points for VPN and QoS:

| Inspection point | Name of fw monitor inspection point | Relation to firewall VM | Available since version |
|---|---|---|---|
| i | Pre-Inbound | Before the inbound FireWall VM | always |
| I | Post-Inbound | After the inbound FireWall VM | always |
| id | Pre-Inbound VPN | Inbound before decrypt | R80.20 |
| ID | Post-Inbound VPN | Inbound after decrypt | R80.20 |
| iq | Pre-Inbound QoS | Inbound before QoS | R80.20 |
| IQ | Post-Inbound QoS | Inbound after QoS | R80.20 |
| o | Pre-Outbound | Before the outbound FireWall VM | always |
| O | Post-Outbound | After the outbound FireWall VM | always |
| e / oe | Pre-Outbound VPN | Outbound before encrypt | R80.10 / R80.20 |
| E / OE | Post-Outbound VPN | Outbound after encrypt | R80.10 / R80.20 |
| oq | Pre-Outbound QoS | Outbound before QoS | R80.20 |
| OQ | Post-Outbound QoS | Outbound after QoS | R80.20 |

The “Pre-Encrypt” fw monitor inspection point (e) and the “Post-Encrypt” fw monitor inspection point (E) are new in R80.10 and above.

Note: They exist only on the outbound side, for encrypting packets, and not on the inbound side for decrypting packets.

New in R80.20+:

In the Firewall kernel (and now also in SecureXL), each chain module is associated with a key, which specifies the type of traffic applicable to the chain module.

`# fw ctl chain`

| Key | Function |
|---|---|
| ffffffff | IP Option Strip/Restore |
| 00000001 | new processed flows |
| 00000002 | wire mode |
| 00000003 | applied to all ciphered traffic (VPN) |
| 00000000 | SecureXL offloading (new in R80.20+) |

| Content Inspection |

|---|

For more details see article: R80.x Security Gateway Architecture (Content Inspection)

Content inspection is a very complicated process. It is shown here only by way of example for the R80.10 IPS and the R80.10 App Classifier, but it also applies to other services; please refer to the corresponding SKs. In principle, all content is processed via the Context Management Infrastructure (CMI) and the CMI Loader and forwarded to the corresponding daemon.

Session-based processing enforces advanced access control and threat detection and prevention capabilities. To do this we assemble packets into a stream, parse the stream for relevant contexts and then security modules inspect the content. When possible, a common pattern matcher does simultaneous inspection of the content for multiple security modules. In multi-core systems this processing is distributed amongst the cores to provide near linear scalability on each additional core.

Security modules use a local cache to detect known threats. This local cache is backed up with real-time lookups of a cloud service. The results of cloud lookups are then cached in the kernel for subsequent lookups. Cloud assist also enhances unknown threat detection and prevention. In particular, a file whose signature is not known in the local cache is sent to our cloud service for processing, where compute, disk and memory are virtually unlimited. Our sandboxing technology, SandBlast Threat Emulation, identifies threats in their infancy before malware has an opportunity to deploy and evade detection. Newly discovered threats are sent to the cloud database to protect other Check Point connected gateways and devices. When possible, active content is removed from files, which are then sent on to the user while the emulation is done.

Passive Streaming Library (PSL) – Packets may arrive out of order or may be legitimate retransmissions of packets that have not yet received an acknowledgment. In some cases a retransmission may also be a deliberate attempt to evade IPS detection by sending the malicious payload in the retransmission. Security Gateway ensures that only valid packets are allowed to proceed to destinations. It does this with Passive Streaming Library (PSL) technology.

- PSL is an infrastructure layer, which provides stream reassembly for TCP connections.

- The gateway makes sure that TCP data seen by the destination system is the same as seen by code above PSL.

- This layer handles packet reordering and congestion handling, and is responsible for various security aspects of the TCP layer such as handling payload overlaps, some DoS attacks and others.

- The PSL layer is capable of receiving packets from the firewall chain and from the SecureXL module.

- The PSL layer serves as a middleman between the various security applications and the network packets. It provides the applications with a coherent stream of data to work with, free of various network problems or attacks.

- The PSL infrastructure is wrapped with well defined APIs called the Unified Streaming APIs which are used by the applications to register and access streamed data.
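To make the idea of stream reassembly more concrete, here is a minimal sketch. It is not the PSL implementation: the class name `PassiveStream` is hypothetical, sequence number wrap-around and overlaps between buffered segments are ignored, and the policy “the first copy of a byte range wins” is an assumption chosen for the example.

```python
class PassiveStream:
    """Rebuilds one direction of a TCP stream from observed segments."""

    def __init__(self, initial_seq):
        self.next_seq = initial_seq   # next byte expected in order
        self.pending = {}             # seq -> payload, waiting for a gap to close
        self.stream = bytearray()     # reassembled data handed to the parsers

    def on_segment(self, seq, payload):
        if seq + len(payload) <= self.next_seq:
            return                    # retransmission of delivered data: ignore
        if seq < self.next_seq:
            # Partial overlap: keep only the bytes not yet delivered.
            payload = payload[self.next_seq - seq:]
            seq = self.next_seq
        self.pending.setdefault(seq, payload)   # first copy wins
        # Deliver everything that is now contiguous.
        while self.next_seq in self.pending:
            chunk = self.pending.pop(self.next_seq)
            self.stream += chunk
            self.next_seq += len(chunk)
```

A segment that arrives early is buffered until the gap before it is filled, and a later retransmission with a different payload for bytes that were already delivered does not change what the inspection code has seen.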

Protocol Parsers – The main functions of the protocol parsers are to ensure compliance with well-defined protocol standards, detect anomalies if any exist, and assemble the data for further inspection by other components of the IPS engine. They include HTTP, SMTP, DNS, IMAP, Citrix, and many others. In a way, protocol parsers are the heart of the IPS system. They register themselves with the streaming engine (usually PSL), get the streamed data, and dissect the protocol.

The protocol parsers can analyze the protocols in both the Client to Server (C2S) and Server to Client (S2C) directions. The output of the protocol parsers is a set of contexts. A context is a well-defined part of the protocol, on which further security analysis can be made. Examples of such contexts are HTTP URL, FTP command, FTP file name, HTTP response, and certain files.

Context Management Infrastructure (CMI) and Protections – The Context Management Infrastructure (CMI) is the “brain” of the content inspection. It coordinates different components, decides which protections should run on a certain packet, decides the final action to be performed on the packet and issues an event log.

CMI separates parsers and protections. A protection is a set of signatures and/or handlers, where:

- Signature – a malicious pattern that is searched for

- Handler – INSPECT code that performs more complex inspection

CMI is a way to connect and manage parsers and protections. Since they are separated, protections can be added in updates, while performance does not depend on the number of active protections. Protections are usually written per protocol context: they get the data from the contexts and validate it against relevant signatures. Based on the IPS policy, the CMI determines which protections should be activated on every context discovered by a protocol parser. If policy dictates that no protections should run, then the relevant parsers on this traffic are bypassed in order to improve performance and reduce potential false positives. When a protection is activated, it can decide whether the given packet or context is OK or not. It does not decide what to do with this packet. The CMI is responsible for the final action to be performed on the packet, given several considerations.

The considerations include:

- Activation status of the protection (Prevent, Detect, Inactive)

- Exceptions either on traffic or on protection

- Bypass mode status (the software fail open capability)

- Troubleshooting mode status

- Protection scope (internal network only, or all traffic)
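How these considerations could combine into one final action is sketched below. The order of the checks and the returned action strings are my own assumptions for illustration, as is the treatment of troubleshooting mode as detect-only. The function name `final_action` is hypothetical.

```python
def final_action(status, has_exception=False, bypass_mode=False,
                 troubleshooting_mode=False, in_scope=True):
    """Decide what happens to a packet after a protection reported a match.

    status is the activation status of the protection:
    "Prevent", "Detect" or "Inactive".
    """
    if status == "Inactive" or has_exception or bypass_mode or not in_scope:
        return "accept"
    if status == "Detect" or troubleshooting_mode:
        return "accept and log"     # assumption: troubleshooting = detect only
    if status == "Prevent":
        return "drop and log"
    raise ValueError(f"unknown activation status: {status!r}")
```

The protection itself only reports that it matched. Whether the packet is dropped is decided in one place, which is the separation of duties described above.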

CMI Loader – collects signatures from multiple sources (e.g. IPS, Application Control,…) and compiles them together into unified Pattern Matchers (PM) (one for each context – such as URL, Host header etc.).

Pattern Matcher – The Pattern Matcher is a fundamental engine within the new enforcement architecture.

- Pattern Matcher quickly identifies harmless packets and common signatures in malicious packets, and does a second-level analysis to reduce false positives.

- Pattern Matcher engine provides the ability to find regular expressions on a stream of data using a two tiered inspection process.
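A two-tiered inspection can be sketched as a cheap literal check followed by a full regular expression only for the candidates that survive the first tier. The signatures below are invented examples and not real Check Point signatures; `SIGNATURES` and `match_context` are hypothetical names.

```python
import re

# (name, tier-1 literal, tier-2 regular expression) - invented examples.
SIGNATURES = [
    ("traversal", "../", re.compile(r"(\.\./){2,}etc/passwd")),
    ("script", "<script", re.compile(r"<script[^>]*>.*?alert\(", re.I | re.S)),
]


def match_context(data):
    """Return the names of all signatures that match one context."""
    hits = []
    lowered = data.lower()
    for name, literal, regex in SIGNATURES:
        if literal not in lowered:   # tier 1: fast substring test
            continue                 # most harmless traffic stops here
        if regex.search(data):       # tier 2: precise check on candidates
            hits.append(name)
    return hits
```

A URL such as `/a/../b` passes the first tier because it contains `../`, but the second tier rejects it, which is how the second level reduces false positives.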

UP Manager – The UP Manager controls all interactions of the components and interfaces with the Context Management Infrastructure (CMI) Loader, the traffic director of the CMI. The UP Manager also has a list of Classifiers that have registered for “first packets” and uses a bitmap to instruct the UP Classifier to execute these Classifier Apps to run on the packet. The “first packets” arrive directly from the CMI. Parsing of the protocol and streaming are not needed in this stage of the connection. For “first packets” the UP Manager executes the rule base.

Classifier – When the “first packet” rule base check is complete Classifiers initiate streaming for subsequent packets in the session. The “first packet” rule base check identifies a list of rules that possibly may match and a list of classifier objects (CLOBs) that are required to complete the rule base matching process. The Classifier reads this list and generates the required CLOBs to complete the rule base matching. Each Classifier App executes on the packet and tells the result of the CLOB to the UP Manager. The CMI then tells the Protocol Parser to enable streaming.

In some cases Classifier Apps do not require streaming because the first-packet information is sufficient. The rule base decision can then be made on the first packet, for example with:

- Dynamic Objects

- Domain Objects

- Only the firewall is enabled

On subsequent packets the Classifier can be contacted directly from the CMI using the CMI Loader infrastructure. For example, when the Pattern Matcher has found a match, it informs the CMI that it has found application xyz. The CMI Loader passes this information to the Classifier. The Classifier runs the Classifier Apps to generate the CLOBs required for Application Control and sends the CLOBs to the Observer.

Observer – The Observer decides whether enough information is known to publish a CLOB to the security policy. CLOBs are observed in the context of their transaction and the connection that the transaction belongs to. The Observer may decide that it has sufficient information about the packet to execute the rule base on the CLOB, or it may request more CLOBs for a dedicated packet from the Classifier, e.g. if a file type is needed for Content Awareness and the gateway has not yet received the S2C response containing the file. Executing the rule base on a CLOB is called “publishing a CLOB”. The Observer may also wait to receive more CLOBs that belong to the same transaction before publishing them.

Security Policy – The Security Policy receives the CLOB published by the Observer. The CLOB includes a description of the Blade it belongs to so that matching can be performed on a column basis. The Security Policy saves the current state on the transaction Handle, either to continue the inspection or to record the final match.

The first packets are received directly from the UP Manager. Subsequent packets are received by the rule base from the Observer.
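Column-based matching can be sketched as follows. This is a simplified Python model that assumes first-match semantics, treats `None` as “Any” in a column, and ends with a cleanup rule. The rules, column names and the functions `narrow`, `decide` and `match` are hypothetical and only illustrate how published CLOBs shrink the list of rules that may still match.

```python
from typing import Dict, List, Optional, Set, Tuple

COLUMNS = ("service", "application", "file_type")
Rule = Dict[str, object]

# Hypothetical rule base; None stands for "Any" in that column.
RULES: List[Rule] = [
    {"id": 1, "service": "https", "application": "Dropbox", "file_type": "pdf", "action": "drop"},
    {"id": 2, "service": "https", "application": "Dropbox", "file_type": None, "action": "accept"},
    {"id": 3, "service": None, "application": None, "file_type": None, "action": "drop"},
]


def narrow(candidates: List[Rule], column: str, value: str) -> List[Rule]:
    """Keep only rules whose column is Any or equals the published value."""
    return [r for r in candidates if r[column] is None or r[column] == value]


def decide(candidates: List[Rule], known: Set[str]) -> Optional[Rule]:
    """Final match only if every non-Any column of the top rule is known."""
    if not candidates:
        return None
    top = candidates[0]
    needed = {c for c in COLUMNS if top[c] is not None}
    return top if needed <= known else None


def match(clobs: List[Tuple[str, str]]) -> Optional[object]:
    """Apply CLOBs in arrival order; return the matched rule id or None."""
    candidates, known = list(RULES), set()
    for column, value in clobs:
        candidates = narrow(candidates, column, value)
        known.add(column)
        rule = decide(candidates, known)
        if rule is not None:
            return rule["id"]
    return None  # inspection has to continue
```

In this model `match([("service", "ssh")])` is decided on the first packet, whereas an HTTPS connection stays undecided until the application and the file type have been published.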

Handle – Each connection may consist of several transactions. Each transaction has a Handle. Each Handle contains a list of published CLOBs. The Handle holds the state of the security policy matching process. The Handle infrastructure component stores the rule base matching state related information.
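The relationship between connection, transaction, Handle and CLOBs can be written down as a small data model. The following Python sketch is an assumption made for illustration: all class and field names are hypothetical, and the Observer logic is reduced to “publish once all required CLOB kinds have arrived”.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Clob:
    blade: str  # blade the CLOB belongs to, e.g. "Content Awareness"
    kind: str   # e.g. "application" or "file_type"
    value: str


@dataclass
class Handle:
    """Per-transaction state of the rule base matching process."""
    published: List[Clob] = field(default_factory=list)
    state: str = "inspecting"


@dataclass
class Transaction:
    required_kinds: Set[str]
    handle: Handle = field(default_factory=Handle)
    pending: List[Clob] = field(default_factory=list)


@dataclass
class Connection:
    transactions: List[Transaction] = field(default_factory=list)


def observe(txn: Transaction, clob: Clob) -> bool:
    """Hold CLOBs until all required kinds are present, then publish them."""
    txn.pending.append(clob)
    seen = {c.kind for c in txn.pending}
    if not txn.required_kinds <= seen:
        return False  # wait for more CLOBs of the same transaction
    txn.handle.published.extend(txn.pending)
    txn.pending.clear()
    return True


conn = Connection([Transaction(required_kinds={"application", "file_type"})])
txn = conn.transactions[0]
published_early = observe(txn, Clob("Application Control", "application", "Dropbox"))
published_late = observe(txn, Clob("Content Awareness", "file_type", "pdf"))
```

Here the application CLOB is held back until the file type is known, and only then are both CLOBs stored on the Handle of the transaction.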

Subsequent Packets – Subsequent packets are handled by the streaming engine. The streaming engine notifies the Classifier to perform the classification. The Classifier notifies the UP Manager about the performed classification and passes the CLOBs to the Observer. The Observer receives the CLOBs and then waits for information from the CMI. The CMI sends the information describing the result of the Protocol Parser and the Pattern Matcher to the Classifier. The Classifier informs the UP Manager and sends the CLOB to the Observer. The UP Manager then instructs the Observer to publish the CLOBs to the Rule Base.

The Rule Base is executed on the CLOBs and the result is communicated to the UP Manager. The CLOBs and related Rule Base state are stored in the Handle. The UP Manager provides the result of the rule base check to the CMI that then decides to allow or to drop the connection. The CMI generates a log message and instructs the streaming engine to forward the packets to the outbound interface.

Content Awareness (CTNT) – Content Awareness is a new blade introduced in R80.10 as part of the new Unified Access Control Policy. Using the Content Awareness blade as part of the Firewall policy allows the administrator to enforce the Security Policy based on the content of the traffic by identifying files and their content. Content Awareness restricts the Data Types that users can upload or download.

Content Awareness can be used together with Application Control to enforce more specific scenarios (e.g. identifying which files are uploaded to Dropbox).

| References |

|---|

SecureKnowledge: SecureXL

SecureKnowledge: NAT Templates

SecureKnowledge: VPN Core

SecureKnowledge: CoreXL

SecureKnowledge: CoreXL Dynamic Dispatcher in R77.30 / R80.10 and above

SecureKnowledge: Application Control

SecureKnowledge: URL Filtering

SecureKnowledge: Content Awareness (CTNT)

SecureKnowledge: IPS

SecureKnowledge: Anti-Bot and Anti-Virus

SecureKnowledge: Threat Emulation

SecureKnowledge: Best Practices – Security Gateway Performance

SecureKnowledge: MultiCore Support for IPsec VPN in R80.10 and above

Download Center: R80.10 Next Generation Threat Prevention Platforms

Download Center: R77 Security Gateway Packet Flow

Download Center: R77 Security Gateway Architecture

Support Center: Check Point Security Gateway Architecture and Packet Flow

Checkmates: Check Point Threat Prevention Packet Flow and Architecture

Checkmates: fw monitor inspection point e or E

Infinity NGTP architecture

Security Gateway Packet Flow and Acceleration – with Diagrams

R80.x Security Gateway Architecture (Content Inspection)

| Questions and Answers |

|---|

Q: Why this diagram with SecureXL and CoreXL?

A: I dared to map both worlds of CoreXL and SecureXL in one diagram. This is only possible to a limited extent, as these are different technologies. It is really an impossible mission. Why?

– CoreXL is a mechanism to assign, balance and manage CPU cores. The CoreXL SND decides to “stick” a particular connection to a specific FWK instance.

– With SecureXL, certain connections can avoid the FW path partially (packet acceleration) or completely (acceleration with templates).

Q: Why both technologies in one flowchart?

A: The two technologies work hand in hand. Showing both in one illustration becomes problematic, e.g. in the Medium Path.

Q: Why in the Medium Path?

A: When the Medium Path is available, the TCP handshake is fully accelerated with SecureXL. The rule base match for the first packet is achieved through an existing connection acceleration template. SYN-ACK and ACK packets are also fully accelerated. However, once data starts flowing, the packets are handled by an FWK instance so that the data can be streamed for Content Inspection. Any packet containing data is sent to FWK for data extraction to build the data stream. RST, FIN and FIN-ACK packets are again handled only by SecureXL, as they do not contain any data that needs to be streamed.
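The split described in this answer can be reduced to a single rule in a Python sketch. It assumes that an acceleration template already exists for the connection and that the only criterion is whether a segment carries payload. The segment tuples and the function `handled_by` are hypothetical, and the real decision logic on the gateway is more involved.

```python
from typing import List, Tuple

Segment = Tuple[str, int]  # (TCP flags, payload length in bytes)


def handled_by(segment: Segment) -> str:
    """Segments with payload go to an FWK instance, all others stay in SecureXL."""
    _flags, payload_len = segment
    return "FWK" if payload_len > 0 else "SecureXL"


# Hypothetical connection: handshake, two data segments, teardown.
connection: List[Segment] = [
    ("SYN", 0),
    ("SYN-ACK", 0),
    ("ACK", 0),
    ("PSH-ACK", 512),
    ("PSH-ACK", 1460),
    ("FIN", 0),
    ("FIN-ACK", 0),
]

path = [handled_by(segment) for segment in connection]
```

The resulting list shows why a single connection appears in both worlds of the flowchart: the same connection alternates between SecureXL and FWK depending on the packet.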

Q: What is the point of this article?

A: To create an overview of both worlds with regard to the following innovations in R80.x:

– new fw monitor inspection points in R80 (e and E)

– new MultiCore VPN with dispatcher

– new UP Manager in R80

Q: Why is there the designation “Logical Packet Flow”?

A: Because the logical flow in the overview differs from the real flow. For example, the medium path is shown only as a single logical representation of the real path. This was necessary to map all three paths (F2F, SXL, PXL) in one image. Hence the name “Logical Packet Flow”.

Q: What’s the next step?

A: I’m thinking about how to make the overview even better.

Q: Wording?

A: It was important to me that the correct Check Point terms were used. Many documents on the Internet use the terms incorrectly. Therefore I am grateful to anyone who points out remaining wording errors here.

Q: What’s the GA version?

A: This version has been approved by a Check Point representative, and we agreed that this should be the final version.

| Versions |

|---|

GA Version R80.20:

1.2a – article update to R80.20 (16.11.2018)

1.2b – update inspection points id, iD and more (19.11.2018)

1.2c – update maximal number of CoreXL IPv4 FW instances (20.11.2018)

1.2d – update R80.20 new functions (05.11.2018)

1.2e – bug fix (06.01.2019)

1.2f – update fw monitor inspection points ie/ IE (23.01.2019)

1.2g – new design (18.03.2019)

GA Version R80.10:

1.1b – final GA version (08.08.2018)

1.1c – change words to new R80 terms (08.08.2018)

1.1d – correct a mistake with SXL and “Accelerated path” (09.08.2018)

1.1e – bug fixed (29.08.2018)

1.1f – QoS (24.09.2018)

1.1g – correct a mistake in pdf (26.09.2018)

1.1h – add PSLXL and CPASXL path in R80.20 (27.09.2018)

1.1i – add “Medium Streaming Path” and “Inline Streaming Path” in R80.20 (28.09.2018)

1.1j – add “new R80.20 chain modules” (22.10.2018)

1.1k – bug fix chain modules (04.11.2018)

1.1l – add “chapters” (10.11.2018)

1.1m – add R80.20 fw monitor inspection points “oe” and “OE” (17.12.2018)

EA Version:

1.0a – final version (28.07.2018)

1.0c – change colors (28.07.2018)

1.0d – add content inspection text (29.07.2018)

1.0e – add content inspection drawing (29.07.2018)

1.0f – update links (29.07.2018)

1.0g – update content inspection drawing flows and action (30.07.2018)

1.0h – change SecureXL flow (30.07.2018)

1.0i – correct SecureXL packet flow (01.08.2018)

1.0j – correct SecureXL names and correct “fw monitor inspection points” (02.08.2018)

1.0k – add new article “Security Gateway Packet Flow and Acceleration – with Diagrams” from 06.08.2018 to “References and links” (06.08.2018)

1.0l – add “Questions and Answers“ (07.08.2018)

Copyright by Heiko Ankenbrand 1994 – 2019